A major policy shift unfolded in Washington as US President Donald Trump directed all federal agencies to immediately discontinue the use of artificial intelligence technology developed by Anthropic. The move follows an escalating disagreement between the Pentagon and the AI company over the potential military applications of its systems.

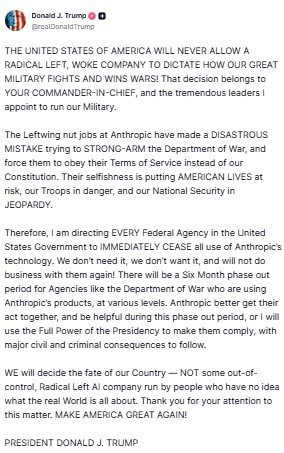

In a statement posted on Truth Social, Trump declared, “I am directing every federal agency in the United States government to immediately cease all use of Anthropic’s technology. We won’t do business with them again because we don’t need or want it.” The directive marks a significant turning point in the federal government’s engagement with one of the nation’s leading AI developers.

Six-Month Phase-Out for Federal Agencies

While the order mandates an immediate halt to new engagements, the President acknowledged that certain agencies – particularly the Defense Department – currently rely on Anthropic’s tools at multiple operational levels. To address this, the administration has established a six-month transition window to allow affected departments to phase out the technology.

The phased withdrawal is intended to ensure continuity of operations while alternative AI systems are identified and deployed. However, the warning accompanying the directive was firm: failure to comply during the transition could result in serious civil and criminal consequences.

| Key Directive | Details |
|---|---|
| Immediate Action | All federal agencies ordered to stop using Anthropic technology. |
| Transition Period | Six-month phase-out for agencies currently utilizing Anthropic systems. |
| National Security Rationale | Administration claims AI restrictions could limit military operational autonomy. |
| Potential Penalties | Civil and criminal consequences warned if compliance issues arise. |
| Pentagon Position | Contractors prohibited from commercial activity with Anthropic during transition. |

Core Dispute: AI Ethics vs. Military Autonomy

The dispute intensified after Dario Amodei, CEO of Anthropic, stated publicly that the company would not remove safeguards embedded within its Claude AI system. According to Amodei, certain military applications – particularly fully autonomous weapon systems and large-scale domestic surveillance – cross ethical and technological boundaries.

“We cannot in good conscience accede to their request,” Amodei said, emphasizing that while Anthropic supports the responsible use of AI to strengthen US national security, existing AI systems are not sufficiently reliable to operate without human oversight in life-and-death targeting decisions.

The administration, however, framed the disagreement as a question of executive authority and military command. Trump asserted that decisions regarding how the United States military fights and conducts operations must rest exclusively with elected leadership and military commanders, not private technology firms.

Pentagon Declares Anthropic a National Security Risk

In a strong follow-up statement, Defense Secretary Pete Hegseth announced that any contractor, supplier, or partner doing business with the US military would be barred from engaging in commercial activity with Anthropic effective immediately.

Hegseth confirmed that Anthropic would be permitted to continue limited services during the six-month phase-out period to allow what he described as a “seamless transition to a better and more patriotic service.”

He further accused the company and its leadership of attempting to exert undue influence over military operations, calling the firm’s stance “fundamentally incompatible with American principles.” The Defense Secretary insisted that the Department of War must retain full and unrestricted access to AI systems for legitimate defense purposes.

Implications for Federal AI Procurement and Defense Strategy

This directive signals a dramatic shift in the federal government’s approach to artificial intelligence procurement and defense technology partnerships. Anthropic has been regarded as one of the most advanced AI labs in the United States, and its exclusion from federal contracts could reshape the competitive landscape for AI providers working with the government.

Beyond procurement concerns, the controversy highlights a broader national debate over AI ethics, military modernization, and the balance between technological safeguards and strategic autonomy. As the six-month transition unfolds, attention will remain focused on how federal agencies adapt, which AI providers emerge as alternatives, and how future AI governance frameworks are defined.

The confrontation underscores a growing tension between Silicon Valley AI developers and national defense institutions – a tension that may increasingly shape US technology policy, military capability development, and national security doctrine in the years ahead.
